How AI-Powered Lead Scoring Can Transform Your Sales Pipeline

Most sales teams still score leads by gut feel. Here's what happens when you replace that with ML-based scoring, and how to actually get it running in your CRM.

Founder & CEO, Airful

I spent a good chunk of last year watching a client's sales team work through a spreadsheet of 2,000 leads, manually tagging each one as "hot," "warm," or "cold" based on gut instinct and a few data points they could eyeball. They had eight reps. Each one had a slightly different definition of "hot." The result was predictable: wasted calls, missed opportunities, and a pipeline that looked full but converted at under 4%.

That experience pushed us to build an ML-based lead scoring system for them. Within three months, their conversion rate doubled. Not because the leads got better — because the reps finally knew which ones to call first.

Here's what we learned, stripped of the hype.

What traditional lead scoring actually looks like

Most companies score leads using some version of a point system. Download a whitepaper? +10 points. Visit the pricing page? +15. Job title is VP or above? +20. These rules are written once, usually by someone in marketing ops, and then left untouched for months or years.

The problem isn't that point systems are stupid. They're a reasonable first approximation. The problem is they're static. They can't adapt to changing buyer behavior, and they treat every signal as independent when signals actually interact. A VP visiting your pricing page after attending a webinar is a very different signal than a VP visiting pricing because they clicked a retargeting ad by accident.
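To make the limitation concrete, a static point system boils down to something like the sketch below. The point values are the illustrative ones from the examples above; the `score_lead` helper, the rule names, and the shape of the lead dictionary are assumptions for illustration, not any CRM's actual API.

```python
# Hypothetical static rules: each signal adds a fixed number of points,
# regardless of what else the lead did or in what order.
POINT_RULES = {
    "downloaded_whitepaper": 10,
    "visited_pricing_page": 15,
    "title_vp_or_above": 20,
}


def score_lead(signals):
    """Sum the points for every rule the lead has triggered."""
    return sum(points for rule, points in POINT_RULES.items() if signals.get(rule))


# Two VPs who both hit the pricing page. One attended a webinar first,
# the other arrived through a mis-clicked retargeting ad.
webinar_vp = {
    "title_vp_or_above": True,
    "visited_pricing_page": True,
    "attended_webinar": True,
}
accidental_vp = {
    "title_vp_or_above": True,
    "visited_pricing_page": True,
    "clicked_retargeting_ad": True,
}

# Both leads receive the same score because the rules ignore context.
print(score_lead(webinar_vp), score_lead(accidental_vp))
```

The rules have no way to express "pricing page after a webinar," which is exactly the kind of interaction a learned model can pick up.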

How ML-based scoring works differently

Machine learning models look at all the data together. Instead of manually assigning point values, you feed the model historical data — every lead you've ever had, what they did, and whether they eventually converted — and the model figures out which patterns actually predict conversion.

The model might discover things your team would never hard-code. One client found that leads who visited their integration docs page within 48 hours of signing up for a free trial converted at 6x the baseline rate. Nobody on the team had ever thought to track that specific sequence.
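A sequence like that only becomes visible to a model once it is encoded as a feature. Here is a minimal sketch of how that could look, assuming page views are stored as (path, timestamp) pairs. The function name and the `/docs/integrations` path prefix are made up for illustration.

```python
from datetime import datetime, timedelta


def visited_docs_soon_after_signup(signup_at, page_views, window_hours=48):
    """Return True if any integration-docs view falls within the window after signup.

    page_views is a list of (url_path, timestamp) tuples. The
    "/docs/integrations" prefix is a hypothetical path.
    """
    deadline = signup_at + timedelta(hours=window_hours)
    return any(
        path.startswith("/docs/integrations") and signup_at <= ts <= deadline
        for path, ts in page_views
    )


signup = datetime(2024, 3, 1, 9, 0)
views = [
    ("/pricing", datetime(2024, 3, 1, 9, 30)),
    ("/docs/integrations/hubspot", datetime(2024, 3, 2, 14, 0)),  # 29 hours after signup
]
print(visited_docs_soon_after_signup(signup, views))
```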

There are three main categories of signals these models consume:

Behavioral data

This is what the lead does: page visits, email opens, content downloads, product usage (if applicable), webinar attendance, chat interactions. The sequencing and timing of these actions matter as much as the actions themselves.

Firmographic data

Company size, industry, location, tech stack, funding stage, growth rate. A 50-person SaaS company in growth mode is a different prospect than a 50-person local services company, even if their engagement looks identical.

Engagement patterns

This is the meta-layer — how frequently someone engages, whether engagement is accelerating or decaying, whether multiple people from the same account are active. A single champion browsing your site is different from three people at the same company all reading case studies in the same week.
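Engagement patterns can be turned into numbers with fairly simple window arithmetic. The sketch below is one possible encoding, assuming events are available as timestamped tuples. The function names, the 7-day window, and the sample account IDs are all illustrative choices, not a standard.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def engagement_trend(event_times, now, window_days=7):
    """Compare activity in the most recent window with the window before it.

    Positive means engagement is accelerating, negative means it is
    decaying, and 0 means it is flat.
    """
    window = timedelta(days=window_days)
    recent = sum(1 for t in event_times if now - window < t <= now)
    previous = sum(1 for t in event_times if now - 2 * window < t <= now - window)
    return recent - previous


def active_contacts_per_account(events, now, window_days=7):
    """Count distinct contacts per account with activity inside the window.

    events is a list of (account_id, contact_id, timestamp) tuples.
    """
    cutoff = now - timedelta(days=window_days)
    contacts = defaultdict(set)
    for account_id, contact_id, ts in events:
        if cutoff < ts <= now:
            contacts[account_id].add(contact_id)
    return {account: len(ids) for account, ids in contacts.items()}


now = datetime(2024, 3, 15)
one_lead_events = [datetime(2024, 3, 2), datetime(2024, 3, 10),
                   datetime(2024, 3, 12), datetime(2024, 3, 14)]
account_events = [
    ("acme", "a1", datetime(2024, 3, 14)),
    ("acme", "a2", datetime(2024, 3, 13)),
    ("acme", "a3", datetime(2024, 3, 10)),
    ("solo", "s1", datetime(2024, 3, 12)),
    ("solo", "s1", datetime(2024, 3, 14)),
]
print(engagement_trend(one_lead_events, now))
print(active_contacts_per_account(account_events, now))
```

In this sample, the "acme" account shows three distinct people active in the same week, while "solo" shows a single champion with repeat visits.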

Getting from theory to production

The gap between "this sounds great" and "this is running in our CRM" is where most lead scoring projects die. Here's a practical path through it.

Step 1: Audit your data

Before you touch any model, look at what you actually have. Pull your last 12 months of closed-won and closed-lost deals. Do you have clean records of what those leads did before they converted or didn't? If your CRM data is a mess — missing fields, inconsistent tagging, no activity tracking — fix that first. No model will compensate for bad data.

Specifically, check for consistent lifecycle stage definitions, reliable activity timestamps, complete contact records on closed deals, and accurate revenue attribution. If any of those are spotty, spend a few weeks cleaning up before moving forward.
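A quick way to see how spotty the data is: measure the share of closed deals missing each field you care about. The sketch below assumes deals have been exported as a list of dictionaries. The field names in `REQUIRED_FIELDS` are placeholders, so substitute whatever your CRM export actually calls them.

```python
# Hypothetical field names; replace with the columns in your own CRM export.
REQUIRED_FIELDS = ["lifecycle_stage", "last_activity_at", "contact_email", "amount"]


def audit_deals(deals):
    """Return the share of deals missing each required field (0.0 to 1.0)."""
    if not deals:
        return {field: 0.0 for field in REQUIRED_FIELDS}
    missing = {field: 0 for field in REQUIRED_FIELDS}
    for deal in deals:
        for field in REQUIRED_FIELDS:
            # Treat absent keys, None, and empty strings as missing.
            if deal.get(field) in (None, ""):
                missing[field] += 1
    return {field: count / len(deals) for field, count in missing.items()}


deals = [
    {"lifecycle_stage": "closed_won", "last_activity_at": "2024-02-01",
     "contact_email": "a@example.com", "amount": 12000},
    {"lifecycle_stage": "closed_lost", "last_activity_at": None,
     "contact_email": "b@example.com", "amount": 8000},
    {"lifecycle_stage": "closed_won", "last_activity_at": "",
     "contact_email": "c@example.com"},
    {"lifecycle_stage": "closed_lost", "last_activity_at": "2024-01-15",
     "contact_email": "d@example.com", "amount": 0},
]

for field, share in audit_deals(deals).items():
    print(f"{field}: {share:.0%} missing")
```

A check like this only catches missing values. Inconsistent stage definitions and wrong attribution still need a human to review a sample of records.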

Step 2: Pick your features

Features are the inputs your model will use. Start with what's easy to capture and known to be predictive: days since last engagement, total page views in past 30 days, number of form submissions, company employee count, industry category.

Resist the temptation to throw in every data point you have. More features don't mean better predictions. They often mean overfitting: the model memorizes noise in your training data instead of learning real patterns. Start with 15-20 features and expand only when you have evidence a new feature improves performance.
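As a rough illustration, the starter features named above could be derived from a raw lead record like this. The record layout (an `events` list of typed, timestamped tuples plus a few firmographic keys) and the `build_features` name are assumptions for the sketch, not a prescribed schema.

```python
from datetime import datetime, timedelta


def build_features(lead, now):
    """Turn one raw lead record into a small starter feature set."""
    events = lead["events"]  # list of (event_type, timestamp) tuples
    last_seen = max(ts for _, ts in events) if events else None
    month_ago = now - timedelta(days=30)
    return {
        "days_since_last_engagement": (now - last_seen).days if last_seen else None,
        "page_views_30d": sum(
            1 for kind, ts in events if kind == "page_view" and ts >= month_ago
        ),
        "form_submissions": sum(1 for kind, _ in events if kind == "form_submit"),
        "employee_count": lead.get("employee_count"),
        "industry": lead.get("industry"),
    }


now = datetime(2024, 3, 15)
lead = {
    "employee_count": 50,
    "industry": "saas",
    "events": [
        ("page_view", datetime(2024, 3, 10)),
        ("page_view", datetime(2024, 1, 5)),   # older than 30 days, not counted
        ("form_submit", datetime(2024, 3, 1)),
    ],
}
print(build_features(lead, now))
```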

Step 3: Train and validate

Use your historical data to train the model. Split it 80/20 — train on 80%, validate on 20%. The metric that matters most isn't accuracy (which is misleading when your conversion rate is low) but precision and recall at the thresholds you care about. Specifically: of the leads the model flags as "high intent," what percentage actually convert? And of the leads that did convert, what percentage did the model catch?
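Those two questions are precision and recall at a chosen score threshold. Here is a minimal sketch of the calculation on a held-out validation set, using made-up scores and outcomes. The `precision_recall_at` name and the 0.65 threshold are illustrative.

```python
def precision_recall_at(scores, converted, threshold):
    """Precision and recall when every lead scoring >= threshold is flagged.

    scores: model scores for the validation leads.
    converted: 1 if the lead actually converted, else 0 (same order as scores).
    """
    flagged = [c for s, c in zip(scores, converted) if s >= threshold]
    true_positives = sum(flagged)
    total_converted = sum(converted)
    precision = true_positives / len(flagged) if flagged else 0.0
    recall = true_positives / total_converted if total_converted else 0.0
    return precision, recall


# Made-up validation data: 10 leads, 3 of which converted.
scores = [0.9, 0.8, 0.7, 0.4, 0.3, 0.2, 0.1, 0.05, 0.6, 0.15]
converted = [1, 1, 0, 0, 1, 0, 0, 0, 0, 0]

precision, recall = precision_recall_at(scores, converted, threshold=0.65)
print(f"precision={precision:.2f} recall={recall:.2f}")

# Why accuracy misleads: a "model" that calls every lead cold is right
# whenever a lead did not convert, which is most of the time.
always_cold_accuracy = converted.count(0) / len(converted)
print(f"always-cold accuracy={always_cold_accuracy:.2f}")
```

Running the calculation at several thresholds shows the trade-off: raising the threshold usually improves precision and costs recall.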

For most B2B pipelines, a gradient boosting model (XGBoost or LightGBM) will outperform simpler approaches without requiring the infrastructure overhead of deep learning.

Step 4: Integrate with your CRM

The model needs to score leads on a regular schedule (batch is fine to start; real time can come later) and surface those scores where reps actually work. That means pushing scores into your CRM, whether that's HubSpot, Salesforce, or something else, as a visible field on the lead record. (If your CRM isn't in good shape yet, read why most CRM implementations fail before bolting on scoring.) Ideally, it also triggers workflows: high-scoring leads get auto-routed to senior reps, medium-scoring leads get nurture sequences, low-scoring leads get deprioritized.

Don't bury the score in a dashboard nobody checks. Put it on the lead record, in the rep's daily task list, and in the routing logic.
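The routing logic itself can be very small. The sketch below maps a score to one of the three actions described above. The 0.7 and 0.4 cutoffs and the action names are placeholders, so pick thresholds from your own validation results and wire the returned action into whatever workflow mechanism your CRM provides.

```python
def route_lead(score, high=0.7, medium=0.4):
    """Map a model score (0.0 to 1.0) to a routing action.

    The default cutoffs are illustrative, not recommended values.
    """
    if score >= high:
        return "route_to_senior_rep"
    if score >= medium:
        return "enroll_in_nurture_sequence"
    return "deprioritize"


for score in (0.85, 0.55, 0.10):
    print(score, route_lead(score))
```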

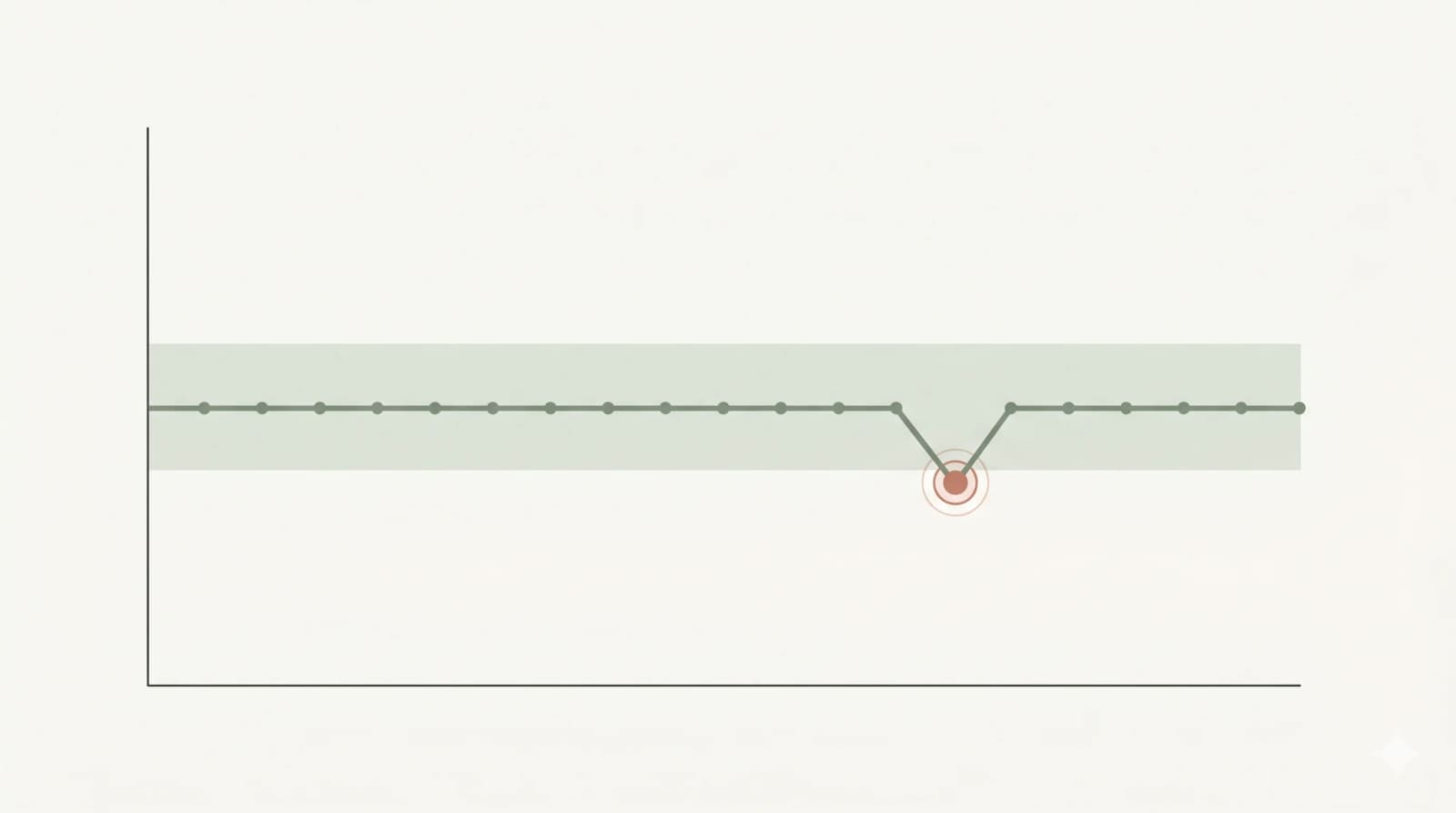

What realistic results look like

Let me be specific about outcomes we've seen across multiple implementations:

- Sales cycles shortened by 15-25%. Reps spend less time qualifying and more time selling to people who are actually ready to buy.

- Conversion rate improvements of 30-80%. The range is wide because it depends on how bad the baseline was. If your reps were basically guessing before, the improvement is dramatic.

- Rep productivity up 20-40%. Not because reps work harder, but because they waste fewer hours on leads that were never going to close.

- Marketing and sales alignment gets better too. When both teams trust the scoring model, the "these leads are garbage" argument goes away. You can have a data-backed conversation about lead quality instead.

Pitfalls that will sink your project

Training on biased data. If your reps historically ignored certain lead segments, your model will learn that those segments don't convert — not because they wouldn't, but because nobody ever tried. Watch for selection bias in your training data.
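One simple check for this kind of selection bias is to compare how often each segment was actually worked. The sketch below assumes each historical lead carries a `segment` label and a `was_contacted` flag; both field names are hypothetical. A segment with a very low contact rate is one where "didn't convert" may really mean "was never tried."

```python
from collections import defaultdict


def contact_rate_by_segment(leads):
    """Share of leads in each segment that a rep actually contacted."""
    totals = defaultdict(int)
    contacted = defaultdict(int)
    for lead in leads:
        totals[lead["segment"]] += 1
        if lead["was_contacted"]:
            contacted[lead["segment"]] += 1
    return {segment: contacted[segment] / totals[segment] for segment in totals}


# Made-up history: enterprise leads were always worked, SMB leads rarely.
leads = (
    [{"segment": "enterprise", "was_contacted": True}] * 4
    + [{"segment": "smb", "was_contacted": True}]
    + [{"segment": "smb", "was_contacted": False}] * 3
)
print(contact_rate_by_segment(leads))
```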

Set-and-forget deployment. Markets change. Buyer behavior shifts. Your model needs retraining on a regular cadence — quarterly at minimum. Build this into your ops process from day one.

Ignoring the humans. If your sales team doesn't understand or trust the scores, they'll ignore them. Involve reps in the validation phase. Show them examples of high-scored leads that converted and low-scored leads that didn't. Let them poke holes in the model. Their buy-in matters more than a few percentage points of model accuracy.

Overcomplicating the initial build. Your first version doesn't need real-time scoring, a custom model, and a full feedback loop. Start with batch scoring, a pre-built algorithm, and manual review. Iterate from there.

Where to start

If you're considering this, here's a straightforward checklist:

- Confirm you have at least 500 closed deals (won and lost combined) with decent data quality.

- Identify 3-5 data sources you can reliably pull behavioral and firmographic data from.

- Define what "conversion" means precisely — is it a closed deal, a qualified opportunity, a demo booked?

- Pick a single pipeline or segment to pilot with rather than trying to score everything at once.

- Set a clear baseline metric (current conversion rate, average deal cycle length) so you can measure improvement.

- Allocate time for your sales team to participate in validation and feedback.
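For the baseline item, the two numbers mentioned (conversion rate and average deal cycle length) are straightforward to compute from closed deals. This is a sketch under the assumption that each deal record has a `won` flag plus `created` and `closed` dates; the field names and the choice to measure cycle length on won deals only are illustrative.

```python
from datetime import date


def baseline_metrics(deals):
    """Return (conversion_rate, average_cycle_days_for_won_deals)."""
    won = [d for d in deals if d["won"]]
    conversion_rate = len(won) / len(deals) if deals else 0.0
    avg_cycle_days = (
        sum((d["closed"] - d["created"]).days for d in won) / len(won) if won else 0.0
    )
    return conversion_rate, avg_cycle_days


deals = [
    {"won": True, "created": date(2024, 1, 1), "closed": date(2024, 1, 31)},
    {"won": False, "created": date(2024, 1, 5), "closed": date(2024, 2, 20)},
    {"won": False, "created": date(2024, 1, 10), "closed": date(2024, 1, 25)},
    {"won": False, "created": date(2024, 2, 1), "closed": date(2024, 3, 1)},
]
rate, cycle = baseline_metrics(deals)
print(f"conversion rate: {rate:.0%}, average cycle: {cycle:.0f} days")
```

Record these numbers before the pilot starts so the comparison afterward is against a fixed reference rather than memory.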

Lead scoring isn't magic. It's pattern recognition applied to your specific sales data. But when it works — when the model is trained on good data, integrated into real workflows, and trusted by the team — it changes how your pipeline operates. The leads that matter float to the top. The noise falls away. It's one piece of what we build under growth architecture — connecting your CRM, marketing automation, and data layer into a system that gets smarter over time.

Your pipeline shouldn't lie to you. If it looks full but closes empty, the problem is prioritization, not effort. Let's find out if ML-based scoring can fix that for your team.
